Posts: 204

Days Won: 7

Other groups

Github Contributors

InGame Verified

Members

Christasmurf last won the day on February 26 2023

Christasmurf had the most liked content!

About Christasmurf

- Birthday 07/20/2000

Personal Information

- BYOND Account: christasmurf

Recent Profile Visitors

2,573 profile views

Christasmurf's Achievements

Chemist (8/37)

Reputation: 106

-

Specific items that wouldn't/shouldn't be available

- Anything telecomms related, syndicate researchers aren't meant to be contacting normal crew
- Records console boards, comms console boards, AI core board, AI/cyborg upload boards etc
- Mech tracking beacons/AI beacons
- Robobrains and syndicate MMIs would need to be changed to syndicate versions to stop them syncing to the station AI and have them loyal to the syndicate that made them

Additionally, IRCs produced by syndicate robotics MAY need extra information telling them they are bound to the same rules as normal researchers and cannot leave

-

Ripping limbs off and pulling organs out seems like a good idea. If you do enough damage to the chest, abdomen or head the organs can fall out if you're using a sharp weapon I believe. Although, if you aren't gibbing for non-permakill reasons, you'll want to make them either unable to remove the head/take organs from it or unable to actually eat the brain, as this is the only part that needs to be retained for people to be revived. The rest of the limbs and organs are entirely superfluous (well they need a heart and lungs but not for cloning)

-

Change the config option "non_repeating_maps" from false to true

Christasmurf replied to Bmon's topic in Suggestions

i want off mr box's wild ride

-

Right, let's see here...

First character was a lovely lady called Audrey, who then became Audrey Atkinson, and I think currently resides, unused as Audrey Goldsmith. First character I made, learning the ropes. Mute, because I made her that way after my first time playing as a mime, I would get job transfers to robotics to learn while still being a mime, eventually changing to just straight robotics and further progressing into medical due to how fun I found the surgery process. Went Mime > Roboticist > Surgeon > and then the rest of Medical, I believe, with the exception of CMO.

Next up, we got Emily. Emily originally started out as a boring, grey IPC called E.M.I.L.E. (Didn't stand for anything). I played one shift as HoP, which was also my first shift as Captain, before realising I didn't like the emotionless paperwork robot. Due to being heavily depressed at the time, I decided to change from no emotions to one emotion - Happy! Fitted with an emotional inhibitor, E.M.I.L.E. got a pink paintjob and became E.M.I.L.Y., later evolving into Emily-5 who 'broke' her inhibitor to allow her to feel all emotions (I got salty), then finally stopping at Emily-7. Nowadays, I don't touch the character slot, but I do still use the name Emily for when I play AI. Went HoP > Captain > AI > (this is where stuff gets messy) CMO > RD > Engineer > CE > I think Warden goes here? Maybe Detective first > More Medical I think > NT Rep > Blueshield > Magistrate > AI again and I think this is where I left off (this list does not include any roles I played for a single shift only, nor does it include the rotation after Magi in which I just cycled between Captain, HoP, Magistrate, NT Rep and Blueshield on repeat for about a year. I played almost every single role as Emily. Cargo was at some point but I don't remember the timeline.)

At some point during the long, seemingly unending Emily saga, I unlocked slime people. (oh god oh fuck she powergaming)

Firstly, I made Domino. A simple, mute slimegirl (to replace Audrey who I made not-mute). Not a very big list here. Bartender > Mechanic > Station Engineer

And then I made Sunrise. A germaphobe, an interesting roleplay challenge. Pretty much the same personality as the rest of my characters (I am bad at roleplaying). Mostly just cycling between Command roles, occasionally switching it up to play Security or Internal Affairs. Usually playing as HoP, if anything. Played a few rounds as Medical Doctor but given the germaphobe thing, I would only do first aid and even then I wouldn't do much in terms of that. Also a fan of Coroner. I don't really remember the specific order but it's something like Security Officer > Security Pod Pilot > Warden > HoS > Detective > Brig Physician > Magistrate > NT Rep > Captain > HoP

I need to learn atmos at some point maybe probably who knows

-

Reach the highest number without an admin posting

Christasmurf replied to Mrs Dobbins's topic in Civilian's Days

just here to put a stop to this before it can even begin

literally1984.mp4

-

Reach the highest number without an admin posting

Christasmurf replied to Mrs Dobbins's topic in Civilian's Days

kill it again

-

Christasmurf changed their profile photo

-

Reach the highest number without an admin posting

Christasmurf replied to Mrs Dobbins's topic in Civilian's Days

three let's go

-

Reach the highest number without an admin posting

Christasmurf replied to Mrs Dobbins's topic in Civilian's Days

wait... i can reset this now...

-

Yes, I'd like the giant blue one that is impossible to carry, thank you

Very good stuff

-

Is it egotistical to say she's beautiful? Because she is.

-

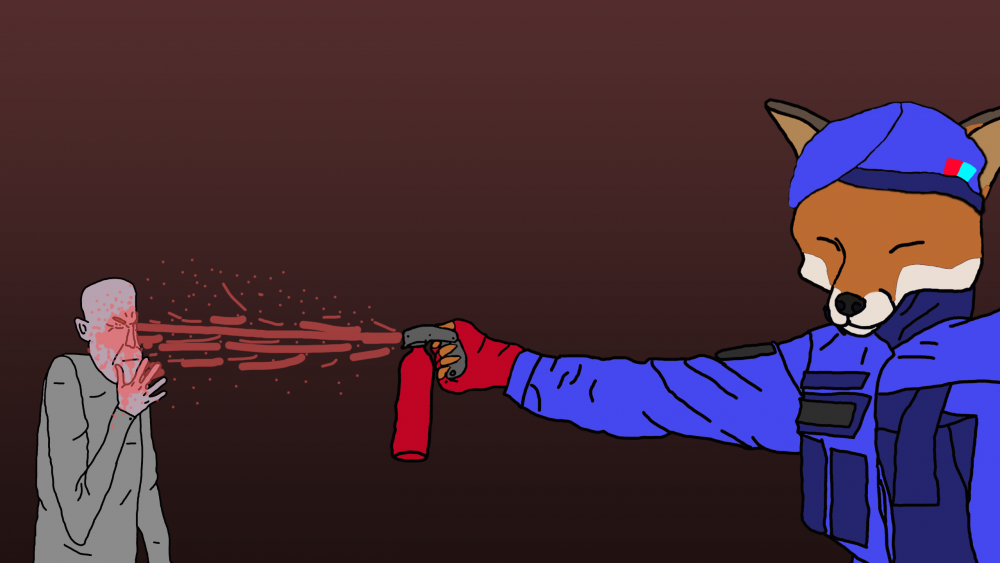

Warden spraying a poor greytide who accidentally wandered into brig

Also I don't know how to draw vulps so have a fox with an awkward beret

-

Reach the highest number without an admin posting

Christasmurf replied to Mrs Dobbins's topic in Civilian's Days

Quick let's keep going further before they find rules on this or something

5000

-

I have no idea where to even start for borg stuff tbh